MaxToki AI Revolutionizes Cellular Aging Prediction with Temporal Gene Network Insights

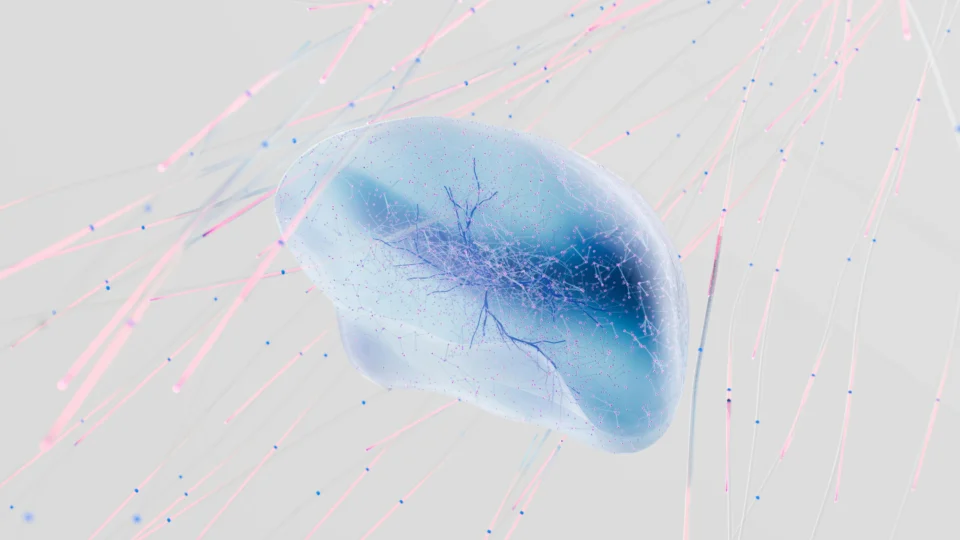

Imagine peering into the future of your own cells, watching as subtle gene-expression shifts over decades foreshadow the onset of age-related diseases like Alzheimer’s or heart failure. This isn’t science fiction; it’s the promise of MaxToki, an advanced AI model that transforms static snapshots of cellular activity into dynamic forecasts of biological time.

Unveiling MaxToki: Transforming AI in Biological Temporal Modeling

MaxToki marks a significant advance in artificial intelligence applied to biology. It is designed to overcome the limitations of traditional models that analyze cells as isolated moments in time. Built on a transformer decoder architecture, like those used in large language models, it processes single-cell RNA sequencing data to predict how cells evolve, particularly as they age. The model addresses a critical gap in computational biology: understanding the progressive gene network changes that drive chronic diseases over years or decades, rather than characterizing only a cell's current state. Developed through a collaborative effort involving researchers from multiple leading institutions, MaxToki integrates data from diverse sources to model human cellular trajectories across the lifespan. Its implications extend to early detection and intervention in age-related conditions, potentially reshaping preventive medicine by identifying at-risk cellular pathways before symptoms emerge.

Core Architecture and Training Innovations

At its foundation, MaxToki employs a rank value encoding for transcriptomes: the genes in each cell are ordered by their expression level relative to their typical expression across a broad reference corpus. This approach highlights dynamically variable genes, such as transcription factors, while minimizing noise from housekeeping genes and technical variation such as batch effects. The training process unfolds in two stages for enhanced temporal reasoning:

- Stage 1 Pretraining: Uses Genecorpus-175M, comprising about 175 million single-cell transcriptomes from 10,795 public datasets across human tissues in health and disease. It excludes malignant or immortalized cells to focus on normal dynamics, and no single tissue exceeds 25% of the data. The corpus yields roughly 290 billion tokens, trained with an autoregressive objective that predicts the next gene in the ranked sequence. Performance scaled as a power law with parameter count, motivating variants with 217 million and 1 billion parameters.

- Stage 2 Extension: Extends the context length from 4,096 to 16,384 tokens using Rotary Positional Embedding (RoPE) scaling. This stage trains on Genecorpus-Aging-22M, which includes 22 million transcriptomes from approximately 600 human cell types across 3,800 donors aged from birth to over 90 years, balanced by gender (49% male, 51% female), and yields 650 billion tokens. Across both stages (roughly 290 + 650 billion), MaxToki trains on nearly 1 trillion gene tokens.
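The rank value encoding described above can be sketched in a few lines of Python. This is a minimal illustration, not MaxToki's code: the input format (a dict of gene name to raw count per cell), the depth normalization, and the use of each gene's nonzero median across the corpus as the scaling factor are all assumptions standing in for the article's phrase "scaled against a broad corpus". The default `max_len` of 4,096 simply mirrors the Stage 1 context length.

```python
from statistics import median


def corpus_medians(cells):
    """Nonzero median of each gene's depth-normalized expression across a corpus.

    Assumption: each cell is a dict of gene name -> raw count, and the corpus
    scaling factor is the gene's median over the cells where it is detected.
    """
    values = {}
    for cell in cells:
        total = sum(cell.values())
        for gene, count in cell.items():
            if count > 0:
                values.setdefault(gene, []).append(count / total)
    return {gene: median(v) for gene, v in values.items()}


def rank_value_encode(cell, medians, max_len=4096):
    """Return the cell's detected genes, ordered by expression relative to the corpus."""
    total = sum(cell.values())
    scores = {
        gene: (count / total) / medians[gene]
        for gene, count in cell.items()
        if count > 0 and gene in medians
    }
    # Highest relative expression first; ties broken alphabetically for determinism.
    ranked = sorted(scores, key=lambda g: (-scores[g], g))
    return ranked[:max_len]  # truncate to the context window


# Toy corpus: ACTB and GAPDH are abundant everywhere; SOX2 and PAX6 vary.
corpus = [
    {"ACTB": 900, "GAPDH": 800, "SOX2": 10, "PAX6": 5},
    {"ACTB": 1000, "GAPDH": 700, "SOX2": 0, "PAX6": 40},
    {"ACTB": 950, "GAPDH": 750, "SOX2": 60, "PAX6": 0},
]
medians = corpus_medians(corpus)
print(rank_value_encode(corpus[2], medians))  # ['SOX2', 'ACTB', 'GAPDH']
```

In this toy corpus, SOX2 has the smallest raw count in the third cell yet ranks first because it sits well above its typical level, while the abundant housekeeping genes ACTB and GAPDH land exactly at their corpus medians and fall behind it. That is the sense in which this kind of encoding favors variable regulators over uniformly high genes.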

Infrastructure optimizations, including FlashAttention-2 in NVIDIA’s BioNeMo framework with bf16 mixed-precision training, achieved a 5x throughput boost and 4x larger micro-batch sizes on H100 GPUs, while key-value caching sped up inference more than 400x. Interpretability studies on the smaller variant revealed that about half of the attention heads prioritized transcription factors, the key regulators of cell-state transitions, without any explicit labels. Ablation tests confirmed that the model relies on both the context trajectory and the query cell, and that relative gene ordering is essential for accuracy. Notably, 95% of generated transcriptomes resembled individual single cells rather than population averages, avoiding a common pitfall of generative models.
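Key-value caching, which the article credits for the inference speedup, can be illustrated with a toy single-head attention layer in pure Python. This sketch is an illustration under simplifying assumptions, not BioNeMo's or MaxToki's implementation: it uses one layer, random weights, and counts only key/value projections. It shows that reusing cached keys and values yields the same outputs as recomputing them at every step while doing far less work. FlashAttention-2, a separate kernel-level optimization, is not modeled here.

```python
import math
import random


def project(x, W):
    """Multiply a row vector x by matrix W (given as a list of rows)."""
    return [sum(xi * W[i][j] for i, xi in enumerate(x)) for j in range(len(W[0]))]


def attend(q, keys, values):
    """Single-head scaled dot-product attention for one query over keys/values."""
    scale = math.sqrt(len(q))
    scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
    top = max(scores)  # subtract the max for a numerically stable softmax
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[j] for w, v in zip(weights, values)) / total for j in range(dim)]


def decode_without_cache(xs, Wq, Wk, Wv):
    """Recompute keys and values for the whole prefix at every step."""
    outputs, projections = [], 0
    for t in range(len(xs)):
        prefix = xs[: t + 1]  # causal: only tokens up to t are visible
        keys = [project(x, Wk) for x in prefix]
        values = [project(x, Wv) for x in prefix]
        projections += 2 * len(prefix)
        outputs.append(attend(project(xs[t], Wq), keys, values))
    return outputs, projections


def decode_with_cache(xs, Wq, Wk, Wv):
    """Project each new token once and append its key/value to a cache."""
    outputs, projections = [], 0
    k_cache, v_cache = [], []
    for x in xs:
        k_cache.append(project(x, Wk))
        v_cache.append(project(x, Wv))
        projections += 2
        outputs.append(attend(project(x, Wq), k_cache, v_cache))
    return outputs, projections


def random_matrix(rows, cols, rng):
    return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]


rng = random.Random(0)
d = 4
Wq, Wk, Wv = (random_matrix(d, d, rng) for _ in range(3))
xs = random_matrix(8, d, rng)  # 8 toy token embeddings

slow, slow_count = decode_without_cache(xs, Wq, Wk, Wv)
fast, fast_count = decode_with_cache(xs, Wq, Wk, Wv)
print(slow_count, fast_count)  # 72 16
```

The uncached count grows as n(n+1) for n generated tokens, while the cached count grows as 2n, so the savings widen as sequences lengthen. The real speedup in a full model also depends on depth, hardware, and sequence length, none of which this toy reproduces.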

Temporal Prompting and Disease Acceleration Insights

MaxToki’s prompting strategy enables in-context learning without explicit cell type or gender labels. A prompt includes a trajectory of two or three cell states with timelapses, followed by a query for one of two tasks:

- Predicting the months required to reach a query cell from the context.

- Generating the transcriptome after a specified timelapse.
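The prompt layout can be pictured as a flat token sequence. The Python sketch below is hypothetical: the special tokens (`<cell>`, `</cell>`, `<time>`, `<predict_months>`), the class names, the placeholder gene names, and the choice to keep timelapses as raw floats (loosely echoing the continuous time encoding the article describes) are illustrative assumptions, not MaxToki's actual vocabulary or API.

```python
from dataclasses import dataclass
from typing import List, Optional, Union

Token = Union[str, float]


@dataclass
class CellState:
    genes: List[str]  # rank-value-encoded gene tokens, highest relative expression first


@dataclass
class TemporalPrompt:
    context: List[CellState]  # two or three states along one trajectory
    timelapses: List[float]   # months between consecutive context states
    query_cell: Optional[CellState] = None  # task 1: months needed to reach this cell
    query_months: Optional[float] = None    # task 2: generate the cell after this long

    def __post_init__(self):
        if not 2 <= len(self.context) <= 3:
            raise ValueError("context must hold two or three cell states")
        if len(self.timelapses) != len(self.context) - 1:
            raise ValueError("need one timelapse between each pair of context states")
        if (self.query_cell is None) == (self.query_months is None):
            raise ValueError("set exactly one of query_cell or query_months")

    def to_tokens(self) -> List[Token]:
        tokens: List[Token] = []
        for i, cell in enumerate(self.context):
            tokens += ["<cell>", *cell.genes, "</cell>"]
            if i < len(self.timelapses):
                tokens += ["<time>", self.timelapses[i]]
        if self.query_cell is not None:
            # Task 1: show the query cell, then leave a slot for the elapsed months.
            tokens += ["<cell>", *self.query_cell.genes, "</cell>", "<predict_months>"]
        else:
            # Task 2: give the timelapse, then open a cell for the model to generate.
            tokens += ["<time>", self.query_months, "<cell>"]
        return tokens


early = CellState(["GENE_A", "GENE_B"])
later = CellState(["GENE_B", "GENE_C"])
prompt = TemporalPrompt(context=[early, later], timelapses=[6.0], query_months=24.0)
print(prompt.to_tokens())
```

The validation mirrors the two constraints the article states: a trajectory of two or three context states, and exactly one of the two query tasks per prompt.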

For time predictions, continuous numerical tokenization with a mean-squared-error loss outperformed categorical methods, yielding a median error of 87 months, far below the 178 months of a linear baseline or the 180 months of naive age assumptions. It achieves a Pearson correlation of 0.85 on unseen cell type trajectories and 0.77 on held-out donor ages, demonstrating robust generalization. In disease contexts, despite training solely on healthy data, MaxToki infers age acceleration by comparing disease cells to normal trajectories:

- In heavy smokers’ lung mucosal epithelial cells, it detects about 5 years of acceleration versus non-smokers, aligning with known telomere shortening effects.

- Pulmonary fibrosis lung fibroblasts show roughly 15 years of acceleration, reflecting senescence and attrition.

- Alzheimer’s microglia exhibit 3 years of acceleration in full disease cases from Mount Sinai and replicated cohorts, but not in mild cognitive impairment or resilient patients, highlighting potential protective mechanisms.
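The evaluation quantities in this section are simple to compute once predictions exist. The sketch below uses made-up toy numbers purely to show the arithmetic; they are not the study's data. The age-acceleration function, defined here as the difference in mean predicted-minus-chronological age between disease and control cells, is an assumed simplification, since the article does not spell out the exact comparison MaxToki uses.

```python
import math
from statistics import mean, median


def median_absolute_error(pred, true):
    """Median of |predicted - true|, in the same units as the inputs (months here)."""
    return median(abs(p - t) for p, t in zip(pred, true))


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / (sx * sy)


def age_acceleration_years(disease_pred, disease_age, control_pred, control_age):
    """Extra predicted aging of disease cells relative to controls (inputs in months).

    Assumed definition: mean (predicted - chronological) gap in disease cells
    minus the same gap in controls, converted from months to years.
    """
    disease_gap = mean(p - a for p, a in zip(disease_pred, disease_age))
    control_gap = mean(p - a for p, a in zip(control_pred, control_age))
    return (disease_gap - control_gap) / 12


# Toy values in months, invented for illustration only.
print(median_absolute_error([100, 200, 310], [120, 190, 300]))  # 10
print(round(pearson_r([1, 2, 3], [2, 4, 6]), 6))                 # 1.0
print(age_acceleration_years([660, 720], [600, 600], [610, 590], [600, 600]))  # 7.5
```

Under this definition, a positive result means disease cells look older than controls of the same chronological age, which is one way to read figures such as the roughly 5-year acceleration reported for smokers.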

These findings suggest MaxToki could screen for novel aging drivers; for instance, it identified pro-aging factors in cardiac cells, validated to induce dysregulation in iPSC-derived cardiomyocytes and dysfunction in mice within six weeks. With models and code publicly released, it invites community extensions for specific diseases or tissues. As longitudinal single-cell data proliferates, tools like MaxToki could standardize early interventions, though uncertainties remain in extrapolating to extreme ages or rare conditions not represented in training corpora. How do you envision AI models like MaxToki influencing personalized medicine and longevity research in the coming years?

Fact Check

- MaxToki, a transformer-based model in 217 million and 1 billion parameter sizes, trains on nearly 1 trillion gene tokens from healthy human single-cell RNA data across life stages.

- Stage 1 uses 175 million transcriptomes from 10,795 datasets, excluding malignant or immortalized cells, with an autoregressive objective that predicts the next ranked gene.

- Temporal prompting yields 87-month median age prediction errors and 0.85 correlation on unseen cell types, generalizing to infer 3-15 years of age acceleration in smoking, fibrosis, and Alzheimer’s cases.

- Optimizations like FlashAttention-2 provide 5x training speedups on H100 GPUs, with 95% of outputs classified as single-cell transcriptomes.

- Interpretability shows self-learned focus on transcription factors, enabling novel driver identification validated in cardiac models.